Computer transistors are now as tiny as we can get them with current technology. As a result, computer scientists have started looking for answers at the atomic and subatomic levels, an area known as quantum computing. Rather than waiting for fully mature quantum computers to emerge, Los Alamos National Laboratory and other leading institutions have developed hybrid classical/quantum algorithms to extract the most performance, and potentially quantum advantage, from today’s noisy, error-prone hardware, as reported in an article in Nature Reviews Physics.

Variational quantum algorithms use quantum hardware to manipulate quantum systems while offloading much of the work to conventional computers, letting those classical machines focus on what they do best: solving optimization problems. Leaders in the industry are racing to build, deploy, and commercialize a viable quantum computer. Such a machine would have the processing capacity to tackle problems that conventional computers cannot currently solve, at least not within a reasonable time.

“Quantum computers have the promise to outperform classical computers for certain tasks, but on currently available quantum hardware they can’t run long algorithms. They have too much noise as they interact with the environment, which corrupts the information being processed,” said Marco Cerezo, a physicist specializing in quantum computing, quantum machine learning, and quantum information at Los Alamos and a lead author of the paper.

“With variational quantum algorithms, we get the best of both worlds. We can harness the power of quantum computers for tasks that classical computers can’t do easily, then use classical computers to complement the computational power of quantum devices.”

Traditional computers process information as bits, each of which is either a one or a zero. Quantum computers instead use quantum bits, or qubits. A qubit can be a one or a zero just like a classical bit, but it can also be in a “superposition,” a combination that represents both a one and a zero at the same time.
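To make superposition concrete, here is a minimal sketch in Python with NumPy. It assumes the standard textbook picture, which the article itself does not spell out: a qubit is a two-component vector, and the squared magnitude of each component gives the probability of measuring a zero or a one. The variable names are illustrative only.

```python
import numpy as np

# Basis states of a single qubit, written as vectors (textbook convention).
zero = np.array([1.0, 0.0])
one = np.array([0.0, 1.0])

# An equal superposition of zero and one.
plus = (zero + one) / np.sqrt(2)

# Measurement probabilities are the squared magnitudes of the amplitudes.
probabilities = np.abs(plus) ** 2

# Each measurement yields a definite 0 or 1; the superposition only shows
# up in the statistics over many repeated measurements.
rng = np.random.default_rng(seed=1)
samples = rng.choice([0, 1], size=1000, p=probabilities)

print("P(0), P(1):", probabilities)
print("fraction of ones in 1000 measurements:", samples.mean())
```

A classical bit would give the same answer on every read. Here roughly half of the simulated measurements return a one, which is what "both a one and a zero at the same time" looks like in practice.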

Current noisy, intermediate-scale quantum computers contain 50 to 100 qubits, quickly lose their “quantumness,” and lack error correction, which would require additional qubits. Since the late 1990s, theoreticians have been developing algorithms designed to run on an idealized large, error-correcting, fault-tolerant quantum computer.

“We can’t implement these algorithms yet because they give nonsense results or they require too many qubits. So people realized we needed an approach that adapts to the constraints of the hardware we have: an optimization problem,” said Patrick Coles, a theoretical physicist developing algorithms at Los Alamos and the senior lead author of the paper.

“We found we could turn all the problems of interest into optimization problems, potentially with quantum advantage, meaning the quantum computer beats a classical computer at the task,” Coles said.

Those problems include simulations for materials science and quantum chemistry, factoring numbers, big-data analysis, and nearly every other application proposed for quantum computers.

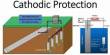

The algorithms are called variational because the optimization process, which is similar to machine learning, changes the algorithm on the fly. It adjusts parameters and logic gates to reduce the cost function, a mathematical expression that measures how well the algorithm has performed the task. When the cost function reaches its lowest possible value, the problem is solved.
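One common way to write such a cost function, given here only as an illustration, is the form used in variational eigensolvers. The symbols are assumptions that do not appear in the article: $\theta$ stands for the adjustable parameters, $U(\theta)$ for the parameterized quantum circuit, and $H$ for an operator that encodes the task.

$$
C(\theta) = \langle 0 |\, U^\dagger(\theta)\, H\, U(\theta)\, | 0 \rangle,
\qquad
\theta^{*} = \arg\min_{\theta} C(\theta)
$$

In this picture, solving the problem means finding the parameters $\theta^{*}$ at which $C(\theta)$ is smallest.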

Quantum computers will alter the data security landscape. Although quantum computers are expected to be capable of cracking many of today’s encryption systems, they are also expected to enable hack-proof alternatives. The qubits in these computers are extremely delicate, however, and any disturbance can cause decoherence.

A variational quantum algorithm runs as an iterative loop. The quantum computer estimates the cost function, then sends the result back to the classical computer. The classical computer adjusts the input parameters and sends them to the quantum computer, which estimates the cost function again, and the cycle repeats.
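The loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the method from the paper: the "quantum computer" is simulated with NumPy as a single qubit rotated by one angle `theta`, the cost is the average measured value of that qubit (+1 for a zero, -1 for a one), and the classical step is plain gradient descent using the parameter-shift rule. All function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

def quantum_estimate_cost(theta, shots=2000):
    """Stand-in for the quantum device (simulated here, an assumption).

    Prepares a single qubit rotated by `theta`, measures it `shots` times,
    and returns the average outcome (+1 for a zero, -1 for a one).
    The exact value would be cos(theta); sampling makes it noisy.
    """
    p_zero = np.cos(theta / 2) ** 2          # probability of measuring 0
    zeros = rng.binomial(shots, p_zero)      # simulated measurement counts
    return (zeros - (shots - zeros)) / shots

def classical_update(theta, learning_rate=0.4):
    """Classical step: estimate the gradient, then take a descent step.

    The gradient comes from two extra cost estimates at shifted angles
    (the parameter-shift rule).
    """
    grad = 0.5 * (quantum_estimate_cost(theta + np.pi / 2)
                  - quantum_estimate_cost(theta - np.pi / 2))
    return theta - learning_rate * grad

def run_hybrid_loop(theta=0.5, iterations=60):
    """Alternate between quantum cost estimates and classical updates."""
    for _ in range(iterations):
        theta = classical_update(theta)
    return theta, quantum_estimate_cost(theta)

theta_opt, final_cost = run_hybrid_loop()
print(f"optimized theta: {theta_opt:.3f}, estimated cost: {final_cost:.3f}")
```

In this toy problem the lowest possible cost is -1, reached when `theta` is close to pi, so the loop should settle near that angle. On real hardware the simulated function would be replaced by circuit runs on the device, while the classical update would stay on an ordinary computer.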

If a stable quantum computer is built, machine learning could speed up exponentially, potentially cutting the time it takes to solve a problem from hundreds of thousands of years to seconds. The review paper is intended to serve as a thorough introduction and educational resource for researchers who are just getting started in this field.

The authors cover the algorithms’ applications and how they work, as well as the obstacles and pitfalls and how to avoid them. Finally, the paper considers the best opportunities for achieving quantum advantage on machines that will be available in the next few years.