The audio on the otherwise shaky body camera footage is unusually clear. As police officers search a handcuffed man who moments earlier had fired shots inside a pizza parlor, an officer asks him why he was there. The man says he came to investigate a pedophile ring. Incredulous, the officer asks again. Another officer chimes in: "Pizzagate. He's talking about Pizzagate."

In that brief, chilling interaction in 2016, it became clear that conspiracy theories, long relegated to the fringes of society, had moved into the real world in a dangerous way. Conspiracy theories, which have the potential to cause significant harm, have found a welcome home on social media, where forums free from moderation allow like-minded people to converse. There they can develop their theories and propose actions to counter the threats they "uncover."

But how can you tell whether an emerging narrative on social media is an unfounded conspiracy theory? It turns out that it is possible to distinguish between conspiracy theories and true conspiracies by using machine learning tools to graph the elements and connections of a narrative. These tools could form the basis of an early warning system that alerts authorities to online narratives that pose a threat in the real world.

The culture analytics group at the University of California, which Vwani Roychowdhury and I lead, has developed an automated approach for determining when conversations on social media show the telltale signs of conspiracy theorizing. We have successfully applied these methods to the study of Pizzagate, the COVID-19 pandemic, and anti-vaccination movements. We are currently using these methods to study QAnon.

Collaboratively constructed, fast to form

Real conspiracies are the deliberately hidden, real-life actions of people working together for their own malign purposes. In contrast, conspiracy theories are collaboratively constructed and develop in the open. Conspiracy theories are deliberately complex and reflect an all-encompassing worldview. Instead of trying to explain one thing, a conspiracy theory tries to explain everything, discovering connections across domains of human interaction that are otherwise hidden, mostly because they do not exist.

While the popular image of the conspiracy theorist is a lone wolf piecing together puzzling connections with photographs and red string, that image no longer applies in the age of social media. Conspiracy theorizing has moved online and is now the end product of collective storytelling. The participants work out the parameters of a narrative framework: the people, places, and things of a story and their relationships.

The online nature of conspiracy theorizing gives researchers an opportunity to trace how these theories develop, from their origins as a series of often disjointed rumors and story fragments into a comprehensive narrative. For our work, Pizzagate presented the perfect subject.

Pizzagate began to develop in late October 2016, during the run-up to the presidential election. Within a month, it was fully formed, with a complete cast of characters drawn from a series of otherwise unlinked domains: Democratic politics, the private lives of the Podesta brothers, casual family dining, and satanic pedophilic trafficking. The narrative thread connecting these otherwise disparate domains was a fanciful interpretation of the leaked emails of the Democratic National Committee that WikiLeaks dumped in the final week of October 2016.

A narrative analysis

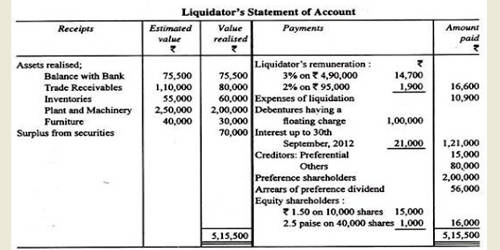

We developed a model, a set of machine learning tools, that can identify narratives based on sets of people, places, and things and their relationships. Machine learning algorithms process large amounts of data to determine the categories of things in the data and then identify which categories particular things belong to. We analyzed 17,498 posts from April 201 through February 2018 on the Reddit and 4chan forums where Pizzagate was discussed. The model treats each post as a fragment of a hidden story and sets out to uncover the narrative. The software identifies the people, places, and things in the posts and determines which are major elements, which are minor elements, and how they are all connected.
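To make the idea of graphing a narrative's elements more concrete, here is a minimal Python sketch. It is a simplified illustration, not the model described above: it assumes the people, places, and things have already been extracted from each post, links two elements whenever they appear in the same post, and uses weighted degree as a crude proxy for separating major from minor elements. The function names (`build_graph`, `rank_entities`, `split_major_minor`), the top-k cutoff, and the toy entity names are all hypothetical.

```python
from collections import Counter, defaultdict
from itertools import combinations


def build_graph(posts):
    """Count how often each pair of entities appears in the same post.

    Each post is a list of entity names; extracting those names from raw
    text is assumed to have happened upstream."""
    edges = Counter()
    for entities in posts:
        # Deduplicate and sort so each undirected pair has one canonical key.
        for a, b in combinations(sorted(set(entities)), 2):
            edges[(a, b)] += 1
    return edges


def rank_entities(edges):
    """Rank entities by weighted degree (total co-occurrence weight)."""
    degree = defaultdict(int)
    for (a, b), weight in edges.items():
        degree[a] += weight
        degree[b] += weight
    # Highest degree first; ties broken alphabetically for stable output.
    return sorted(degree.items(), key=lambda item: (-item[1], item[0]))


def split_major_minor(ranked, k):
    """Hypothetical rule: the top-k entities are 'major', the rest 'minor'."""
    major = [name for name, _ in ranked[:k]]
    minor = [name for name, _ in ranked[k:]]
    return major, minor


# Invented toy input: four "posts", already reduced to entity lists.
posts = [
    ["person_A", "place_B", "thing_C"],
    ["person_A", "place_B"],
    ["person_A", "thing_C"],
    ["place_D", "thing_E"],
]

edges = build_graph(posts)
ranked = rank_entities(edges)
major, minor = split_major_minor(ranked, k=3)
print("major:", major)
print("minor:", minor)
```

On this toy input, `person_A` co-occurs most often and ranks first; the fixed top-k cutoff is only a placeholder for whatever criterion a real system would use to decide which elements are central.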

The model determines the main layers of the narrative (in the case of Pizzagate: Democratic politics, the Podesta brothers, casual dining, satanism, and WikiLeaks) and how the layers come together to form the narrative as a whole. To ensure that our methods produced accurate output, we compared the narrative framework graph produced by our model with illustrations published in The New York Times. Our graph aligned with those illustrations and also offered finer levels of detail about the people, places, and things and their relationships.
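The two steps in this paragraph, splitting a narrative graph into layers and checking it against a reference graph, can be sketched in Python under heavy simplifying assumptions. The sketch assumes a weighted graph stored as a dict mapping entity pairs to co-occurrence counts. It treats connected components (after dropping weak edges) as a crude stand-in for layers; real narrative layers overlap, so a realistic pipeline would more plausibly use a community-detection algorithm. The article does not say how the comparison with the published illustrations was scored, so the Jaccard overlap of edge sets used here is an assumed measure. The names `layers` and `edge_overlap`, the `min_weight` parameter, and the toy data are hypothetical.

```python
from collections import defaultdict


def layers(edges, min_weight=1):
    """Crude stand-in for layer detection: connected components of the
    graph after dropping edges lighter than min_weight."""
    adjacency = defaultdict(set)
    for (a, b), weight in edges.items():
        if weight >= min_weight:
            adjacency[a].add(b)
            adjacency[b].add(a)
    seen, components = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        # Iterative depth-first search collects one component.
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node not in component:
                component.add(node)
                stack.extend(adjacency[node] - component)
        seen |= component
        components.append(sorted(component))
    return components


def edge_overlap(model_edges, reference_edges):
    """Jaccard similarity of two undirected edge sets, plus the edges the
    model found that the reference graph lacks."""
    def normalize(pairs):
        return {tuple(sorted(pair)) for pair in pairs}

    model, reference = normalize(model_edges), normalize(reference_edges)
    union = model | reference
    score = len(model & reference) / len(union) if union else 1.0
    return score, sorted(model - reference)


# Invented toy graph: edge -> co-occurrence weight.
edges = {
    ("person_A", "place_B"): 2,
    ("person_A", "thing_C"): 2,
    ("place_B", "thing_C"): 1,
    ("place_D", "thing_E"): 1,
}
# Invented "reference" edges, standing in for a hand-drawn illustration.
reference = [("place_B", "person_A"), ("place_D", "thing_E")]

print(layers(edges))
score, extra = edge_overlap(edges, reference)
print(score, extra)
```

On the toy graph, the two components come out as two "layers," and the overlap score is 0.5; the edges listed as absent from the reference play the role of the extra detail a model graph can add beyond a hand-drawn illustration.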