Voice-based gadgets and services are becoming an increasingly common part of everyday life. With this in mind, researchers from the Tokyo Institute of Technology and RIKEN in Japan conducted a meta-synthesis to better understand how we perceive and interact with the voices (and bodies) of robots and other computer agents. Their findings reveal human preferences that engineers and designers may use to build future speech systems.

Humans typically communicate through vocal and auditory means. We convey not only linguistic information but also the subtleties of our emotional states and personalities. Tone, rhythm, and pitch are all important aspects of our voice that influence how others perceive us. In other words, how we say things matters.

As technology progresses and social robots, conversational agents, and voice assistants enter our lives, our interactions are expanding to include computer agents, interfaces, and environments. Research on these technologies spans several fields, depending on the type of technology studied: human-agent interaction (HAI), human-robot interaction (HRI), human-computer interaction (HCI), and human-machine communication (HMC).

The influence of computer speech on user perception and interaction has been studied extensively. However, these studies are scattered across a variety of technologies and user groups, and they focus on different features of voice.

To address this, a team of researchers from Tokyo Institute of Technology (Tokyo Tech), RIKEN Center for Advanced Intelligence Project (AIP), and gDial Inc., Canada, has compiled findings from studies across these fields, with the goal of providing a framework to guide future computer voice design and research.

Lead researcher Associate Professor Katie Seaborn of Tokyo Tech (Visiting Researcher and former Postdoctoral Researcher at RIKEN AIP) explains, “Voice assistants, smart speakers, vehicles that can speak to us, and social robots are already here. We need to know how best to design these technologies to work with us, live with us, and match our needs and desires. We also need to know how they have influenced our attitudes and behaviors, especially in subtle and unseen ways.”

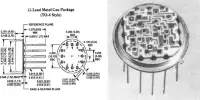

The team’s study examined peer-reviewed journal articles and conference papers that focused on how users perceive agents’ voices. The source materials covered a wide range of agent, interface, and environment types and technologies, with “bodyless” computer voices, computer agents, and social robots making up the majority.

Most of the user responses reported in these studies came from university students and adults. The researchers identified and analyzed trends across these papers and drew inferences about how agent voices are perceived in a range of interaction scenarios.

According to the findings, users anthropomorphized the agents they interacted with and preferred agents whose personality and speaking style matched their own. Human voices were preferred over synthesized ones. Verbal fillers, such as pauses and phrases like “I mean…” and “um”, increased engagement.

The survey found that people liked human-like, cheerful, compassionate voices with a higher pitch. However, these preferences did not remain constant over time; for example, user preferences for voice gender shifted from masculine toward more feminine voices. Based on their findings, the researchers developed a high-level framework for categorizing different types of interaction across various computer-based technologies.

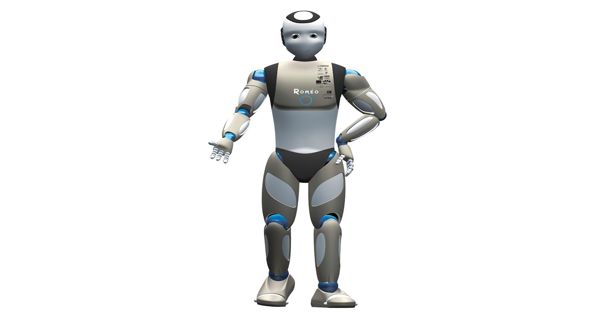

The researchers also examined the influence of the agent’s body, that is, its morphology and form factor, which may take the form of a virtual or physical character, a display or interface, or even an object or environment. They found that users perceived agents more positively when the agents were embodied and their voice “matched” their body.

The field of human-computer interaction, and voice-based interaction in particular, continues to grow and evolve. The team’s survey therefore offers an important starting point for researching and developing new and existing technologies in voice-based human-agent interaction (vHAI).

“The research agenda that emerged from this work is expected to guide how voice-based agents, interfaces, systems, spaces, and experiences are developed and studied in the years to come,” Prof. Seaborn concludes, summing up the importance of their findings.